How to Optimize your Website Navigation without Guessing

- Scott Olivares

- Jan 8, 2020

- 9 min read

Bad navigation can really ruin what could have been a good experience. Once, when I was driving home to San Jose from Lake Tahoe, Waze sent me through a dark town and to a dead end at someone’s personal, and creepy-looking, ranch. I uninstalled it and boycotted it for like a year… I’ve given it a second chance since then.

I’m sure many can empathize with my experience. A modern GPS can be great because it reduces the stress of trying to get somewhere, can get you there faster, and can even tell you where the cheapest gas is along your route! However, when it goes haywire, doesn’t tell you your next turn fast enough, or even sends you to the wrong place… it’s infuriating.

Well, your website’s navigation is GPS for your users, and you owe it to them and yourself to make sure it is easy to understand and gets them to where they want to go.

In this post, I’m going to share my approach for optimizing your website navigation in a methodical, data-driven way. I call it the DIVE method: Discover, Ideate, Validate, Evolve.

If you follow this method, you will be able to do the following things:

Learn how effective or confusing your current navigation is

Come up with many ideas on how to improve it

Validate whether your ideas make your future navigation more effective

Create a list of future optimization ideas for your navigation

Like all efforts to improve something, we start with a research phase I call Discovery…

Discovery – Create navigation benchmark through tree testing

The first step in improving your navigation is to understand how effective it currently is at helping users find what they are looking for.

One way to do this is through Tree Testing.

For those not familiar with it, Tree Testing is a usability technique for evaluating the findability of topics on a website while taking all design aspects out of the equation (source: Wikipedia).

A good tree test asks the right participants to think of the right tasks and has them solve each task with one of the options in your website navigation.

There are various platforms you can use for Tree Testing. The one I turn to the most is Optimal Workshop, which offers Tree Testing and Card Sorting (more on this in the next section).

To get the best data from a Tree Test, follow these steps:

Find the right audience – Generate data that represents reality by only recruiting participants that align with your target audience. You can do this by asking screener questions to include the right participants and exclude the wrong ones. Make sure you don’t have just anyone take your tree test because then your data won’t represent your target audience and won’t mean anything: garbage in, garbage out.

Figure out what the tasks are – Think of what the main objectives of your website are, then break down all the different tasks that lead to those objectives. These tasks will be the basis of how we measure the effectiveness of your navigation.

Write non-leading tasks/questions – Don’t give away the answer or lead people down the wrong way with the words you use in your tasks. Try to write tasks and questions that provide direction, but don’t influence the user toward taking a specific action. Leading questions are one of the most common ways to create a bias in your results, and biased results lead to making decisions based on bad data. For some help with writing non-leading questions, check out this page on SurveyMonkey’s website.

Create a Tree – Your Tree will be the hierarchy of your existing navigation, in text format. For example, in most cases the top branch of your tree would be your main navigation links, the secondary branch would be the sub-navigation options under each main navigation category, the third branch would be any tertiary navigation, etc.

Define your answers – Every task should have one or multiple answers, and each answer needs to be one of the options in your tree. If the task you have cannot be answered with one of the options in your tree, then there is a glaring gap in your tree test – either the task isn’t relevant to your website, or your navigation fails to help people with a very important website task.

If you take each of these steps to plan and build your tree test, then you are set up for success and ready to launch the research study.
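
To make the "Create a Tree" and "Define your answers" steps concrete, here is a minimal sketch in Python. The tree, the tasks, and the function names are all hypothetical and invented for illustration; they are not an Optimal Workshop export format. The sketch writes the navigation as a nested structure and flags any task whose answer has no home in the tree.

```python
# Hypothetical navigation tree for an imaginary coffee shop website.
# Keys are navigation labels; values are the sub-navigation beneath them.
TREE = {
    "Shop": {
        "Coffee": {},
        "Keto Products": {},
        "Bundles": {},
    },
    "About": {
        "Our Story": {},
        "Contact": {},
    },
}

# Hypothetical tasks, each mapped to the tree paths that count as correct.
TASKS = {
    "You want to buy a gift set for a friend.": ["Shop > Bundles"],
    "You have a question about a late order.": ["About > Contact"],
    "You want to manage your subscription.": ["Account > Subscriptions"],
}


def all_paths(tree, prefix=""):
    """Return every node in the tree as a 'Parent > Child' path string."""
    paths = []
    for label, children in tree.items():
        path = f"{prefix} > {label}" if prefix else label
        paths.append(path)
        paths.extend(all_paths(children, path))
    return paths


def tasks_with_missing_answers(tasks, tree):
    """Return each task that has answers missing from the tree, with those answers."""
    valid = set(all_paths(tree))
    return {
        task: [a for a in answers if a not in valid]
        for task, answers in tasks.items()
        if any(a not in valid for a in answers)
    }


gaps = tasks_with_missing_answers(TASKS, TREE)
for task, missing in gaps.items():
    print(f"No place in the tree for {task!r} (expected {missing})")
```

In this made-up example, the subscription task gets flagged, which is exactly the kind of gap described above: either the task isn’t relevant, or the navigation is missing something important.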

When the tree test is complete, each critical task will have a score, and you will also have a score for the entire tree test. This is your benchmark; it indicates how effective your current navigation is at helping users find the solution to each task.
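
If you want a feel for what sits behind a score like this, here is a rough sketch that computes a plain success rate per task from raw results. The data and names are hypothetical, and this is an assumption about the simplest possible scoring, not Optimal Workshop’s formula; the tool’s own score may factor in more than whether participants landed on a correct answer.

```python
from collections import defaultdict

# Hypothetical raw results: (task id, path the participant ended on).
RESULTS = [
    (1, "Shop > Coffee"),
    (1, "Shop > Coffee"),
    (1, "Shop > Bundles"),
    (1, "Shop > Coffee"),
    (2, "Shop > Keto Products"),
    (2, "About > Our Story"),
    (2, "Shop > Coffee"),
    (2, "Shop > Bundles"),
]

# Hypothetical correct answers for each task.
CORRECT = {
    1: {"Shop > Coffee"},
    2: {"Shop > Keto Products"},
}


def success_rates(results, correct):
    """Share of participants per task who ended on a correct answer."""
    attempts = defaultdict(int)
    successes = defaultdict(int)
    for task_id, chosen_path in results:
        attempts[task_id] += 1
        if chosen_path in correct[task_id]:
            successes[task_id] += 1
    return {task_id: successes[task_id] / attempts[task_id] for task_id in attempts}


rates = success_rates(RESULTS, CORRECT)
overall = sum(rates.values()) / len(rates)  # unweighted mean across tasks

for task_id, rate in sorted(rates.items()):
    print(f"Task {task_id}: {rate:.0%}")
print(f"Overall: {overall:.0%}")
```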

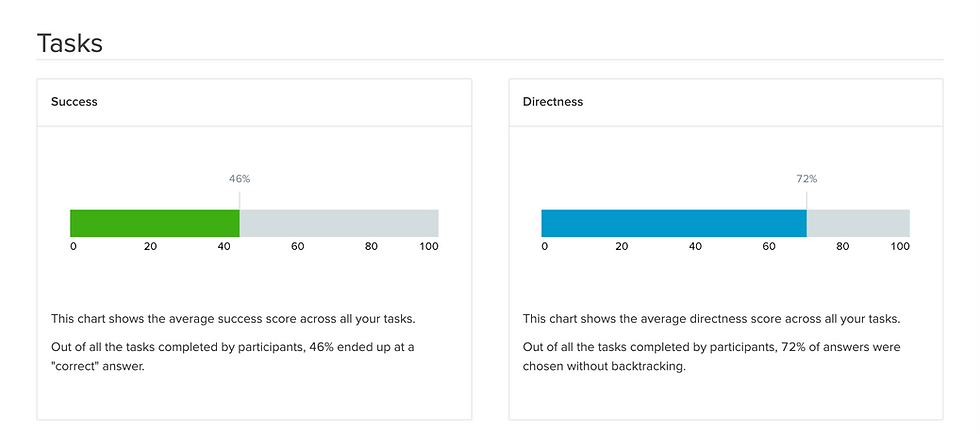

This is what a tree test score looks like in Optimal Workshop:

Overall

Task Breakdown Summary

Task Specific Data

Ideate – Get improvement ideas through card sorting

The best ideas are based on research, evidence, and a strong hypothesis.

After conducting a benchmark tree test, you should have some good evidence about the areas of your navigation that help users and those that are confusing.

Just by conducting a benchmark tree test, you’ll probably have a bunch of ideas on how to improve. However, gathering ideas from people in your target audience is a huge opportunity to really break through and think different. When you gather ideas from people that match your target audience (ideally outside of your company), you can mitigate any biases or blinders that exist from being too close to your website/product.

One way to generate great ideas from your audience about navigation is Card Sorting. For those unfamiliar with this technique, here’s the definition from www.usability.gov:

“Card sorting is a method used to help design or evaluate the information architecture of a site. In a card sorting session, participants organize topics into categories that make sense to them and they may also help you label these groups. To conduct a card sort, you can use actual cards, pieces of paper, or one of several online card-sorting software tools.”

Again, I like to use Optimal Workshop for card sorting because it’s an easy platform to use, aggregates results, and even makes it easy to recruit participants. However, there are various options for card sorting, including getting the right people together in a conference room and having them organize stickies in groups that make sense to them.

Here’s what card sorting looks like inside of Optimal Workshop:

Similar to tree testing, it’s important to have the right card sorting participants to generate good data. Remember, garbage in, garbage out.

Cards and Categories

There are two elements to card sorting: cards and categories.

Cards - The cards in a card sort contain the topics that need to be organized into groups. The topics in a navigation card sort should contain all the various navigation options that exist on your website.

Categories - The categories in a card sort are the topics under which your participants will group the cards. Each category will usually map to one of your primary navigation options that contains sub-navigation options underneath it.

Types of Card Sorting Techniques

There are a few card sorting techniques: open, closed, and hybrid. Depending on the type of research that you’re doing, one technique may yield better results than another.

I have listed them below from my least favorite to my most favorite for the purpose of improving navigation.

Least favorite option for navigation purposes: open card sort – In an open card sort, you provide no categories. Instead the participants are required to create the categories they think make the most sense, then group the cards according to those categories. I generally avoid open card sorts for navigation ideation because it is too wide open, and you risk not being able to detect any patterns. If you have participants that are super motivated and care about providing you with their best work, then it COULD work. However, if you have some participants that aren’t very focused, or begin getting tired, you can end up with a lot of junk.

Okay option for navigation purposes: closed card sort – In a closed card sort, you provide participants with all the categories they are allowed to use. Then they must group the cards into only those categories. Closed card sorts work okay, and you will be able to detect trends about your existing navigation. However, they provide no room for participants to provide new category ideas. Where open card sorts are too wide open, closed card sorts may be too restrictive.

Favorite option for navigation purposes: hybrid card sort – In a hybrid card sort, you provide participants with the categories, but they have the option to create new categories that they think make more sense. This is my favorite approach for navigation because you provide participants with most or all of the category options, but if one of the options doesn’t make sense to them, they are able to provide you with alternatives. This creates a nice blend of guidance and freedom to create, which allows you to detect patterns and gain new category ideas.
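
Here is a small sketch of how you might look for patterns in hybrid card sort results yourself. Everything in it is hypothetical (the cards, the categories, the function names); card sorting platforms aggregate results for you, so treat this as an illustration of the idea rather than any tool’s method. For each card, it finds the most popular category, how many participants agreed, and whether that category was one a participant invented.

```python
from collections import Counter, defaultdict

# Hypothetical hybrid card sort results: one dict per participant,
# mapping each card to the category that participant placed it in.
# "Gifts" is a category participants created on their own.
SORTS = [
    {"Gift Sets": "Bundles", "Dark Roast": "Coffee", "MCT Oil": "Keto Products"},
    {"Gift Sets": "Gifts", "Dark Roast": "Coffee", "MCT Oil": "Keto Products"},
    {"Gift Sets": "Gifts", "Dark Roast": "Coffee", "MCT Oil": "Coffee"},
    {"Gift Sets": "Gifts", "Dark Roast": "Coffee", "MCT Oil": "Keto Products"},
]

# The categories you provided up front (hypothetical).
PROVIDED_CATEGORIES = {"Coffee", "Keto Products", "Bundles"}


def placements_by_card(sorts):
    """Count how often each card was placed in each category."""
    counts = defaultdict(Counter)
    for sort in sorts:
        for card, category in sort.items():
            counts[card][category] += 1
    return counts


def summarize(sorts, provided):
    """Per card: most common category, agreement share, and whether it is new."""
    summary = {}
    for card, counter in placements_by_card(sorts).items():
        category, votes = counter.most_common(1)[0]
        summary[card] = {
            "top_category": category,
            "agreement": votes / sum(counter.values()),
            "participant_created": category not in provided,
        }
    return summary


summary = summarize(SORTS, PROVIDED_CATEGORIES)
for card, info in summary.items():
    flag = " (new category idea)" if info["participant_created"] else ""
    print(f"{card}: {info['top_category']} at {info['agreement']:.0%} agreement{flag}")
```

A card with high agreement on a participant-created category, like "Gift Sets" in this made-up data, is a strong hint that one of your existing labels isn’t landing.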

Create Your New Navigation

After completing a benchmark tree test and at least one card sort, you should be overflowing with ideas on how to make your navigation better. It’s now time to take what you’ve learned from all your participants, combine that with your knowledge of the business, and create a new navigation that you think is going to be more helpful than the benchmark.

Validate – Compare new navigation vs benchmark through tree testing

Now that you have a new navigation that you think will work better than the benchmark (i.e. your existing navigation), it’s time to prove it by conducting a validation tree test.

What is a validation tree test?

The validation tree test is very similar to the benchmark tree test. The only difference is that the tree will be different. In the benchmark, the tree was built from your existing navigation. In the validation, the tree will be built from the new navigation that you think will be better.

The screener questions, tasks and number of participants should remain the same in order to get a fair comparison.

By doing this, you are essentially creating an A/B test for your navigation’s information architecture (IA). By keeping the screener questions, tasks and number of participants the same, but changing the tree, you are isolating the IA and can attribute any changes in the score to the IA.
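
Since this works like an A/B test, you may want a rough check on whether a change in a task’s success rate is bigger than what chance alone would produce. The sketch below uses a two-proportion z-test, which is a common statistical check that I’m adding as an illustration and is not something the tree testing tool does for you as far as this post is concerned. The participant counts are hypothetical, and with small samples the result is only approximate.

```python
import math


def two_proportion_z_test(successes_a, n_a, successes_b, n_b):
    """Compare two success rates; return (z statistic, two-sided p-value)."""
    p_a = successes_a / n_a
    p_b = successes_b / n_b
    # Pooled success rate under the assumption that both trees perform the same.
    pooled = (successes_a + successes_b) / (n_a + n_b)
    standard_error = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if standard_error == 0:
        return 0.0, 1.0  # everyone succeeded or everyone failed in both studies
    z = (p_b - p_a) / standard_error
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical task: 20 of 50 succeeded on the benchmark tree,
# 32 of 50 succeeded on the new tree.
z, p_value = two_proportion_z_test(20, 50, 32, 50)
print(f"z = {z:.2f}, p = {p_value:.3f}")
```

A small p-value suggests the difference is unlikely to be noise. A large one means you probably need more participants before drawing conclusions about that task.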

What to do if the benchmark has a higher score

So, what happens if the benchmark beats your new navigation? Well, that’s okay because every step backward is a step forward!

We’re going to be wrong sometimes, and those situations provide us with learnings on what not to do and ideas for what we could do instead. That is what’s great about the scientific, methodical process we’re following. We are testing our assumptions, and if we realize that we are moving in the wrong direction, we can learn, tweak, and re-validate.

With every Tree Test you run, whether you beat the benchmark or lose to it, you need to analyze the results, develop insights, and come up with more improvement ideas. If your new navigation had a lower score than the benchmark, analyze the data and try to figure out why, develop ideas you think will help, and tweak your new navigation accordingly.

Keep following this process until you finally beat the benchmark. If you stick to this test and learn process, you WILL learn your way to a better experience.

What to do when you beat the benchmark

If you beat the benchmark, then congratulations! However, your work is not done.

You should still take the time to analyze the results of the validation tree test and try to develop ideas on how to keep improving it. You will almost certainly find some. Then, put these ideas on your optimization roadmap so you can continue trying to improve your new navigation later.

Track the Differences

Optimal Workshop is great, but it doesn’t yet provide an easy way to compare tree test results across various studies.

But we can solve this easily by opening up a spreadsheet and creating a table with the following columns:

Then, in the last row, add the overall score for each tree test.

It should look something like this:

This will allow you to evaluate each navigation version against the others and pinpoint differences.
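
If you would rather keep this comparison in code than in a spreadsheet, here is a minimal sketch. The column names and every number are made up for illustration, except task 2’s 23% (benchmark) and 7% (second validation), which come from the example discussed in this post. The overall scores are assumed to be copied in from each study, not computed.

```python
# One column per tree test (hypothetical names).
STUDIES = ["Benchmark", "Validation 1", "Validation 2"]

# Success rate (%) per task, one value per study. Hypothetical numbers,
# apart from task 2's benchmark (23) and second validation (7).
SCORES = {
    "Task 1": [40, 55, 62],
    "Task 2": [23, 15, 7],
    "Task 3": [51, 48, 70],
}

# Overall score per study, as reported by your tool (hypothetical values).
OVERALL = [38, 39, 46]


def regressions(scores, baseline=0, candidate=-1):
    """Tasks that scored lower in the candidate study than in the baseline."""
    return {
        task: row[candidate] - row[baseline]
        for task, row in scores.items()
        if row[candidate] < row[baseline]
    }


def print_table(studies, scores, overall):
    """Print tasks as rows and studies as columns, with overall scores last."""
    print(f"{'Task':<10}" + "".join(f"{name:>14}" for name in studies))
    for task, row in scores.items():
        print(f"{task:<10}" + "".join(f"{value:>13}%" for value in row))
    print(f"{'Overall':<10}" + "".join(f"{value:>13}%" for value in overall))


print_table(STUDIES, SCORES, OVERALL)
for task, change in regressions(SCORES).items():
    print(f"{task} dropped by {abs(change)} points versus the benchmark")
```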

Not all tasks are created equal

You’ll notice in the example above that most tasks in the second validation had a higher score than the benchmark, but a couple of tasks had a lower score. For example, task #2 had a 23% success rate in the benchmark, but a 7% success rate in the second validation.

This may or may not be acceptable to you; it all depends on how important task #2 is relative to the rest of the tasks.

If keto products make up 80% of your website’s revenue, then hurting the score for that task would not be acceptable, and you would need to find a way to increase that score before moving forward. However, if keto products only represent 5% of your revenue, but coffee and bundles represent most of your business, then you should move forward with the new navigation and make it a point to keep trying to improve the findability of keto products without hurting the findability of coffee or bundles.
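
One way to make this trade-off explicit is to weight each task’s score by how much that task matters to the business. The sketch below is an illustration under assumptions: the success rates, the revenue splits, and the idea of using revenue share as the weight are all hypothetical choices, and you may prefer a different measure of importance.

```python
def weighted_score(success_rates, weights):
    """Average the task success rates, weighted by each task's importance."""
    total_weight = sum(weights.values())
    return sum(success_rates[task] * weights[task] for task in weights) / total_weight


# Hypothetical success rates per task for each tree.
BENCHMARK = {"coffee": 0.40, "keto": 0.23, "bundles": 0.51}
VALIDATION = {"coffee": 0.62, "keto": 0.07, "bundles": 0.70}

# Two hypothetical revenue splits, matching the two scenarios above.
KETO_HEAVY = {"coffee": 0.10, "keto": 0.80, "bundles": 0.10}
KETO_LIGHT = {"coffee": 0.55, "keto": 0.05, "bundles": 0.40}

for label, weights in [("Keto is 80% of revenue", KETO_HEAVY),
                       ("Keto is 5% of revenue", KETO_LIGHT)]:
    before = weighted_score(BENCHMARK, weights)
    after = weighted_score(VALIDATION, weights)
    verdict = "new navigation wins" if after > before else "benchmark wins"
    print(f"{label}: {before:.1%} -> {after:.1%} ({verdict})")
```

With the same raw scores, the new navigation loses when keto dominates revenue and wins when it doesn’t, which is the point: the decision depends on what each task is worth to you.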

Evolve – A/B test, build, and continue learning

Now that you’ve found a new navigation that has a higher tree test score than your benchmark, I recommend that you launch an A/B test on your live website with your new navigation.

The methodical process we’ve followed so far will greatly increase the chances that you’ve found a more intuitive navigation, but until now, all our effort has been to come up with the best IA. However, navigation is more than just IA. The IA needs to be evaluated in the context of other elements such as page design, hover behavior, page load speed, etc. An A/B test on your live website is the best way to truly know if you’ve found a better navigation.

And finally, even after you A/B test and truly improve your business through an improved navigation, you should continue testing all those ideas you had when you were analyzing your final tree test.

Just because you improved from where you were doesn’t mean it’s the best you can do.

Good luck! Let me know if you have any questions… I’m happy to help.
